Intro to Large Language Models

AI for Research, ESCP, 2025-2026

2026-02-11

What does GPT do? Text completion.

Do you like poetry?

A rose is a rose is a rose

Gertrude Stein

Brexit means Brexit means Brexit

John Crace

Elementary my dear Watson

P.G. Wodehouse

There is an easy way for the government to end the strike without withdrawing the pension reform,

Complete Text

Generative language models perform text completion

They generate plausible text following a prompt.

The type of answer will depend on the kind of prompt.

GPT Playground

To use GPT-4 proficiently, you have to experiment with the prompt.

- try the Playground mode

It is the same as learning how to write Google queries

- AltaVista: +noir +film -"pinot noir"

- nowadays: ???

“Prompting” is becoming a discipline in itself… (or is it?)

Some Examples

By providing enough context, it is possible to perform amazing tasks

What is a language model?

Language Models and Cryptography

The Caesar code

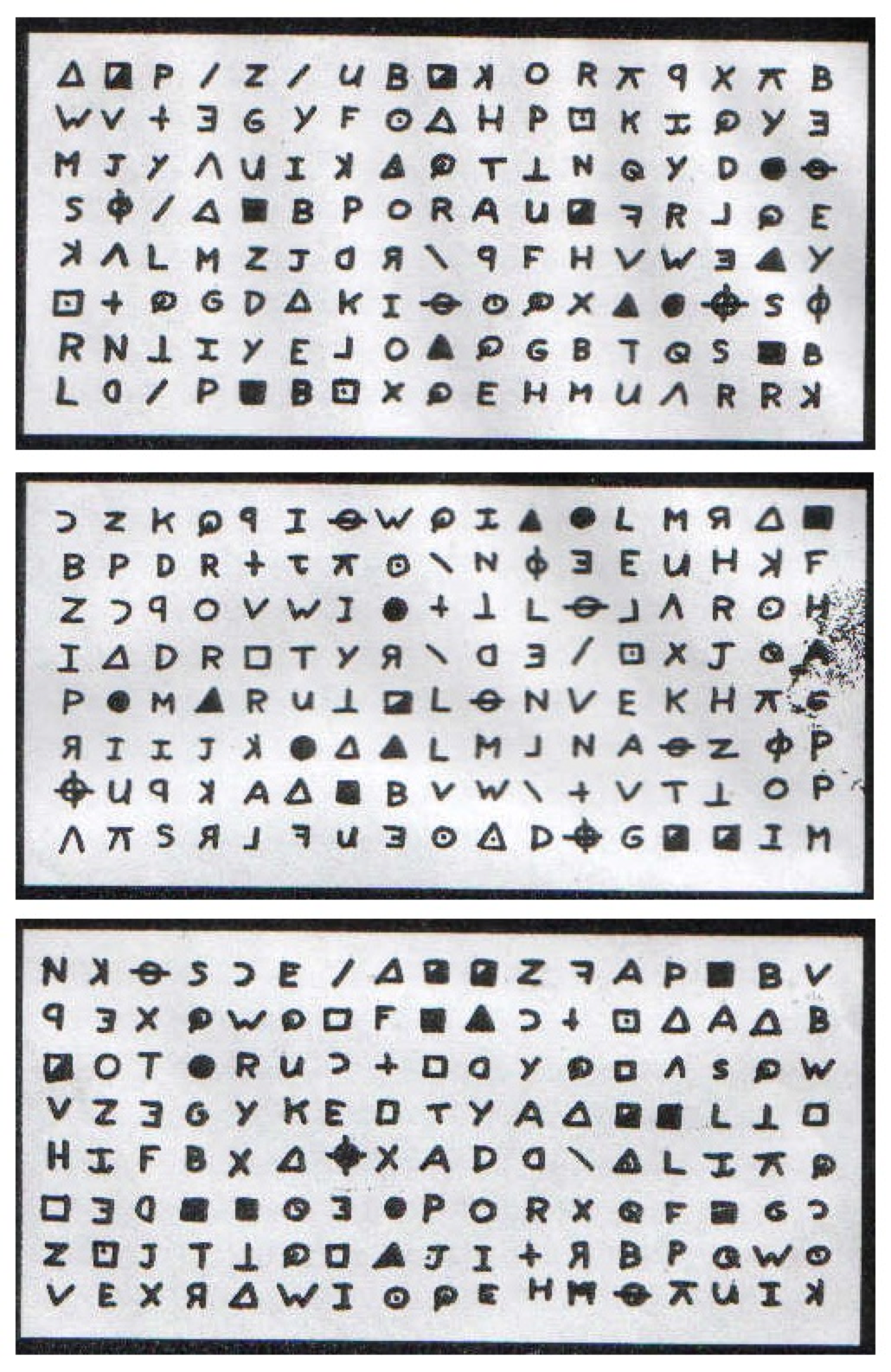

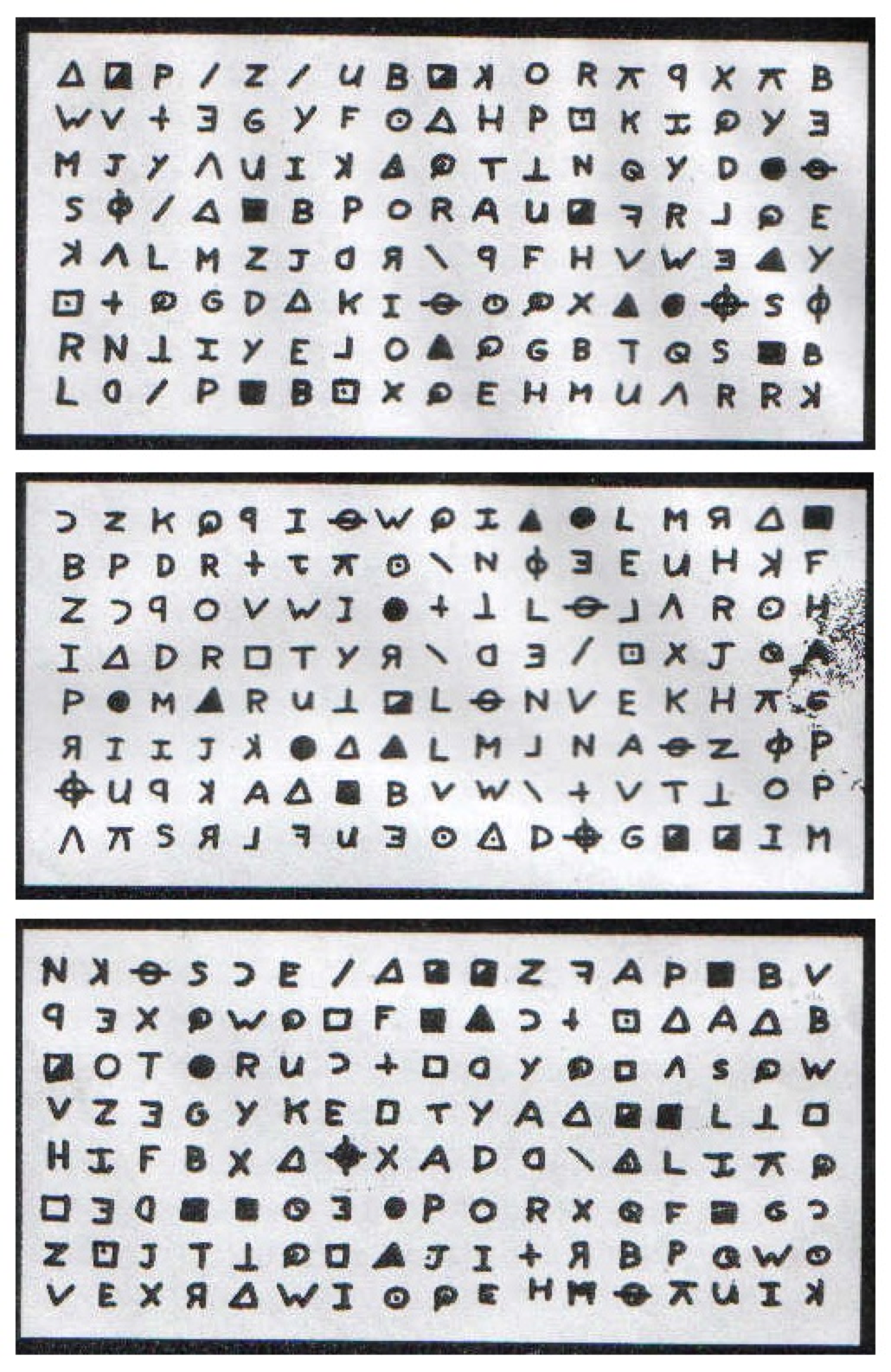

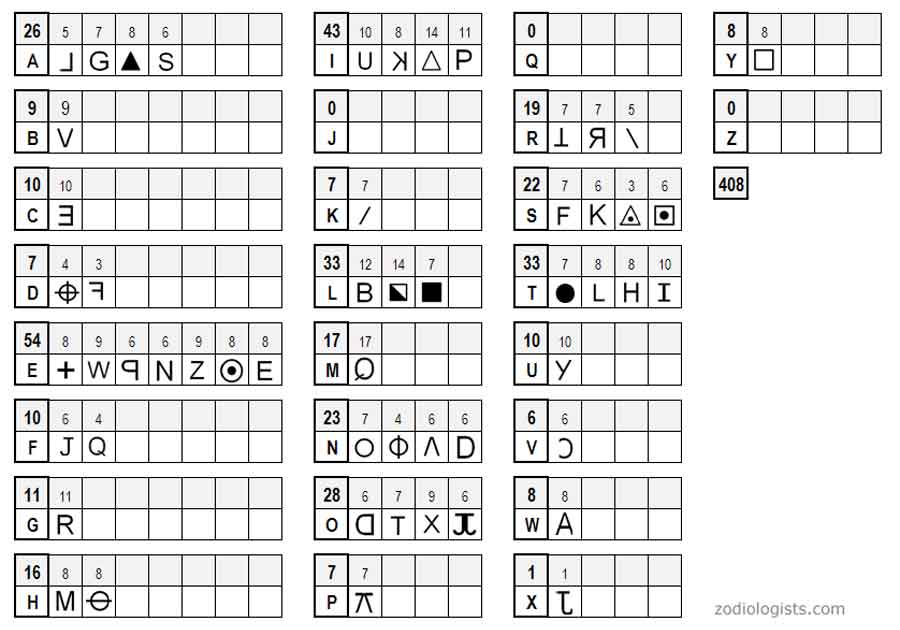
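As a reminder of what the Caesar code does, here is a minimal sketch. It assumes a lowercase 26-letter alphabet and leaves every other character untouched; the function name `caesar` and the shift of 3 are illustrative choices, not taken from the slides.

```python
import string

ALPHABET = string.ascii_lowercase

def caesar(text: str, shift: int) -> str:
    """Shift every letter by `shift` positions, wrapping around after 'z'."""
    out = []
    for ch in text.lower():
        if ch in ALPHABET:
            out.append(ALPHABET[(ALPHABET.index(ch) + shift) % 26])
        else:
            out.append(ch)  # spaces and punctuation are left as they are
    return "".join(out)

secret = caesar("a rose is a rose", 3)
print(secret)              # d urvh lv d urvh
print(caesar(secret, -3))  # a rose is a rose
```

The key is a single number between 0 and 25, which is why the code is so easy to break.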

Zodiac 408 Cipher

Later in 2001, in a prison, somewhere in California

Solved by Stanford’s Persi Diaconis and his students using Monte Carlo Markov Chains

Monte Carlo Markov Chains

Take a letter \(x_n\), what is the probability of the next letter being \(x_{n+1}\)?

\[\pi_{X,Y} = P(x_{n+1}=Y \mid x_{n}=X)\]

for \(X, Y \in \{a, b, \ldots, z\}\)
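The transition probabilities can be estimated by counting pairs of consecutive letters. The sketch below is one possible way to do it; treating the space as a 27th symbol and the toy sentence are assumptions made for illustration.

```python
from collections import Counter, defaultdict

def transition_probabilities(text: str) -> dict[str, dict[str, float]]:
    """Estimate P(next = Y | current = X) from counts of consecutive pairs."""
    symbols = [c for c in text.lower() if c.isalpha() or c == " "]
    counts: dict[str, Counter] = defaultdict(Counter)
    for current, following in zip(symbols, symbols[1:]):
        counts[current][following] += 1
    # normalise each row so that it sums to one
    return {
        x: {y: n / sum(row.values()) for y, n in row.items()}
        for x, row in counts.items()
    }

pi = transition_probabilities("the theory of the thing")
print(pi["t"])  # in this toy text every 't' is followed by 'h'
print(pi["h"])  # 'h' is followed by 'e' three times and by 'i' once
```

With a real corpus the table has at most 27 x 27 entries, which is small enough to store and inspect.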

The language model can be trained on a dataset of English text.

It can then be used to determine whether a given cipher key is consistent with the English language.

This yields a very efficient algorithm to decode any Caesar code (even from a very small sample).
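A hedged sketch of the idea for the Caesar code: since there are only 26 keys, one can try them all and keep the candidate whose letter pairs look most like English. The reference text (the opening of Pride and Prejudice), the add-one smoothing and the use of pair frequencies as the score are illustrative assumptions. For a general substitution cipher the key space is far too large to enumerate, and that is where sampling over keys becomes useful.

```python
import math
import string
from collections import Counter

ALPHABET = string.ascii_lowercase
N_SYMBOLS = 27  # 26 letters plus the space

# Small reference text standing in for "a dataset of English" (assumption).
REFERENCE = (
    "it is a truth universally acknowledged that a single man in possession "
    "of a good fortune must be in want of a wife however little known the "
    "feelings or views of such a man may be on his first entering a "
    "neighbourhood this truth is so well fixed in the minds of the "
    "surrounding families that he is considered the rightful property of "
    "some one or other of their daughters"
)

def shift_text(text: str, shift: int) -> str:
    """Caesar shift on lowercase letters; other characters are unchanged."""
    return "".join(
        ALPHABET[(ALPHABET.index(c) + shift) % 26] if c in ALPHABET else c
        for c in text
    )

def make_scorer(reference: str):
    """Return a function giving the smoothed log-frequency of a text's letter pairs."""
    counts = Counter(zip(reference, reference[1:]))
    total = sum(counts.values())

    def score(text: str) -> float:
        # add-one smoothing: unseen pairs are unlikely but not impossible
        return sum(
            math.log((counts[pair] + 1) / (total + N_SYMBOLS ** 2))
            for pair in zip(text, text[1:])
        )

    return score

def crack_caesar(ciphertext: str, score) -> str:
    """Try all 26 keys and return the most English-looking candidate."""
    candidates = [shift_text(ciphertext, -k) for k in range(26)]
    return max(candidates, key=score)

score = make_scorer(REFERENCE)
plain = "there is nothing either good or bad but thinking makes it so"
cipher = shift_text(plain, 7)
print(cipher)
print(crack_caesar(cipher, score))
```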

MCMC to generate text

MCMCs can also be used to generate text:

- take initial prompt: I think therefore I

- last letter is I

- most plausible character afterwards is a space

- last letter is a space

- most plausible character afterwards is I

- Result: I think therefore I I I I I I

Not good but promising (🤷)
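The procedure above can be reproduced in a few lines. This is only a sketch: the tiny training text is made up for the occasion, and the generator always picks the single most frequent follower of the last character.

```python
from collections import Counter, defaultdict

def most_likely_next(text: str) -> dict[str, str]:
    """For each character, find the character that most often follows it."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for current, following in zip(text, text[1:]):
        counts[current][following] += 1
    return {c: row.most_common(1)[0][0] for c, row in counts.items()}

def generate(prompt: str, table: dict[str, str], n_chars: int) -> str:
    """Extend the prompt one character at a time, looking only at the last one."""
    out = prompt
    for _ in range(n_chars):
        out += table[out[-1]]
    return out

corpus = "i think therefore i am i think i can i think i know"
table = most_likely_next(corpus)
print(generate("i think therefore i", table, 10))
# i think therefore i i i i i i
```

With one character of memory the chain quickly falls into a short loop, which is what motivates a longer memory or a different basic unit.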

MCMC to generate text

Going further

- augment memory: fore I -> ???

- change basic unit (use phonemes or words)

An example using MCMC

- using words and 3 states

He ha ‘s kill’d me Mother , Run away I pray you Oh this is Counter you false Danish Dogges .
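A minimal sketch of a word-level chain with a longer memory. Here the state is the last two words (the `order` parameter), the training text is a short made-up excerpt, and the seed is fixed only to make runs repeatable; none of these choices come from the slides.

```python
import random
from collections import defaultdict

def build_chain(words: list[str], order: int) -> dict[tuple, list[str]]:
    """Map each sequence of `order` words to the list of words seen right after it."""
    chain: dict[tuple, list[str]] = defaultdict(list)
    for i in range(len(words) - order):
        state = tuple(words[i:i + order])
        chain[state].append(words[i + order])
    return chain

def sample(chain, start: tuple, n_words: int, seed: int = 0) -> str:
    """Random walk on the chain: draw the next word among observed followers."""
    rng = random.Random(seed)
    out = list(start)
    order = len(start)
    for _ in range(n_words):
        followers = chain.get(tuple(out[-order:]))
        if not followers:  # state never seen in training: stop
            break
        out.append(rng.choice(followers))
    return " ".join(out)

text = ("to be or not to be that is the question whether tis nobler "
        "in the mind to suffer")
words = text.split()
chain = build_chain(words, order=2)
print(sample(chain, ("to", "be"), 8))
```

Every group of three consecutive words in the output was seen in the training text, which is why the result looks locally plausible even when it makes no global sense.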

Big MCMC

Can we augment memory?

- if you want to compute the most frequent letter (among 26) after 50 letters, you need to take into account 5.6061847e+70 combinations!

- impossible to store, let alone do the training

- but some combinations are useless:

wjai dfni

Despite the constant negative press covfefe 🤔

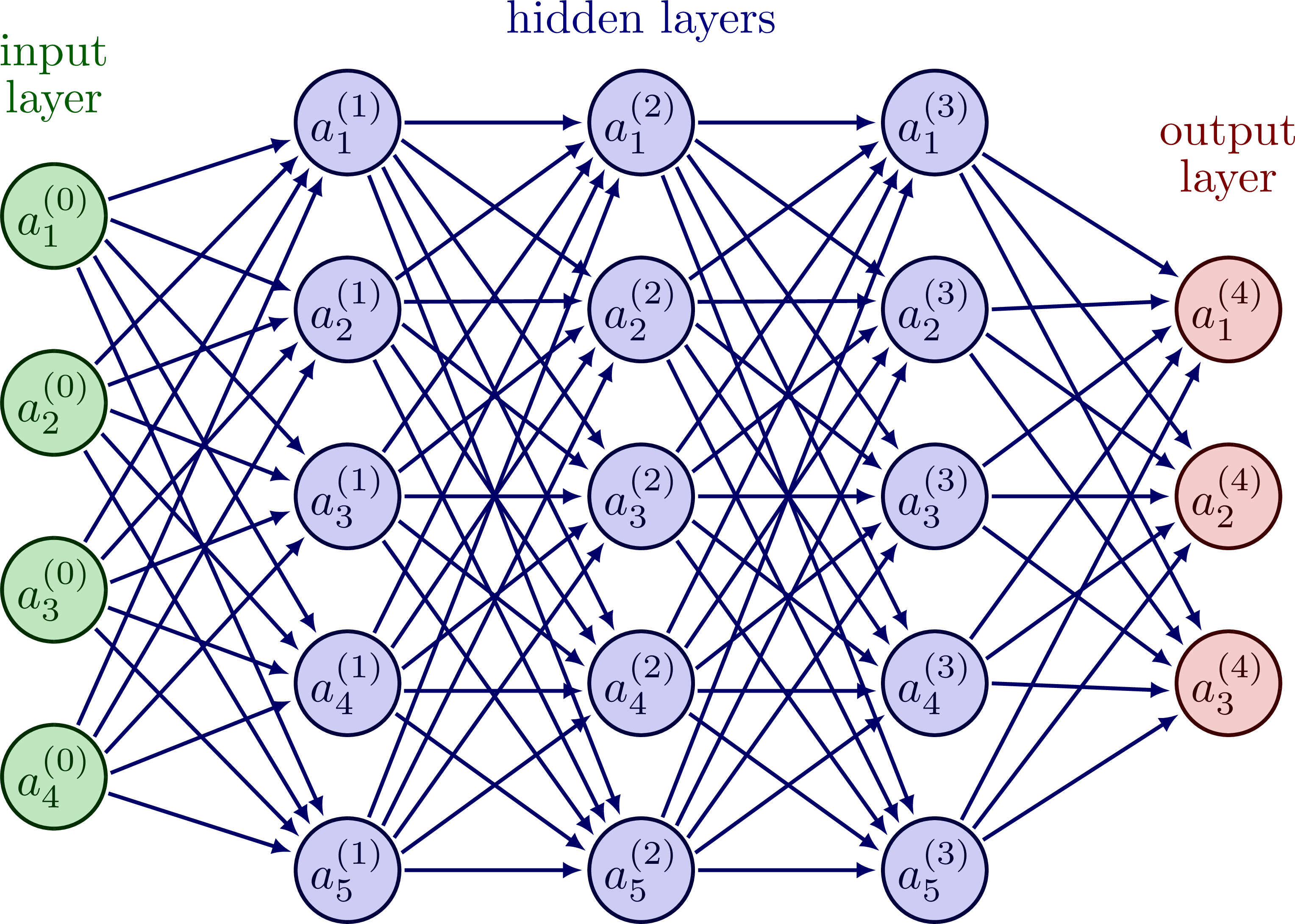
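The count of combinations is simply 26 to the power 50. A quick check, with illustrative variable names:

```python
ALPHABET_SIZE = 26
CONTEXT_LENGTH = 50

# Python integers are exact, so this is the true number of possible contexts
n_contexts = ALPHABET_SIZE ** CONTEXT_LENGTH
print(f"{float(n_contexts):.2e}")  # roughly 5.6e+70

# For comparison, short memories stay perfectly tractable
for k in (1, 2, 3):
    print(k, ALPHABET_SIZE ** k)   # 26, 676, 17576
```

The table grows by a factor of 26 for each extra letter of memory, so a direct frequency table is hopeless long before 50 letters.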

Neural Networks

- Neural networks make it possible to increase the state-space to represent

\[\forall X, P(x_n=X| x_{n-1}, ..., x_{n-k}) = \varphi^{NL}( x_{n-1}, ..., x_{n-k}; \theta )\]

with a smaller vector of parameters \(\theta\)

- Neural networks endogenously reduce the dimensionality.

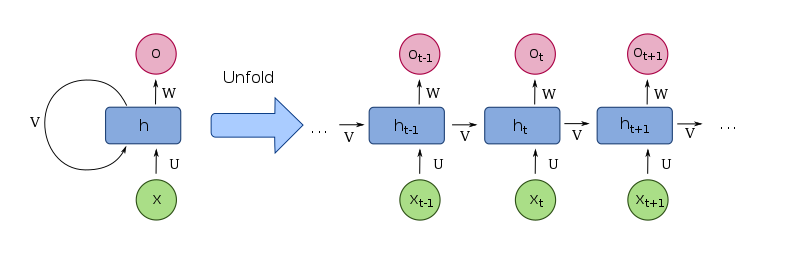
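To make the parameter saving concrete, here is a hedged sketch of a tiny one-hidden-layer network that maps the previous `K` symbols to a probability for each next symbol. The sizes, the tanh activation and the random (untrained) weights are arbitrary assumptions; the point is only to compare the number of parameters with the size of a full frequency table.

```python
import math
import random

VOCAB = 27    # 26 letters plus space (assumption)
K = 5         # number of previous symbols used as context
HIDDEN = 16   # width of the hidden layer (arbitrary)

rng = random.Random(0)

def rand_matrix(rows: int, cols: int) -> list[list[float]]:
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]

W1 = rand_matrix(HIDDEN, K * VOCAB)  # context -> hidden
W2 = rand_matrix(VOCAB, HIDDEN)      # hidden -> score of each next symbol

def one_hot_context(indices: list[int]) -> list[float]:
    """Concatenate K one-hot vectors, one per context position."""
    x = [0.0] * (K * VOCAB)
    for pos, idx in enumerate(indices):
        x[pos * VOCAB + idx] = 1.0
    return x

def next_symbol_probs(indices: list[int]) -> list[float]:
    """Forward pass: hidden layer, then a softmax over the vocabulary."""
    x = one_hot_context(indices)
    h = [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in W1]
    logits = [sum(w * hi for w, hi in zip(row, h)) for row in W2]
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    z = sum(exps)
    return [e / z for e in exps]

probs = next_symbol_probs([19, 7, 4, 26, 2])  # indices of five context symbols
n_params = HIDDEN * K * VOCAB + VOCAB * HIDDEN
n_table = VOCAB ** K * VOCAB  # one probability per (context, next symbol)
print(n_params, n_table)      # 2592 versus 387420489
```

The weights here are random, so the probabilities are meaningless until the network is trained; only the comparison of sizes matters.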

Recurrent Neural Networks

In 2015

- Neural networks reduce the dimensionality of data by discovering structure

- hidden state encodes the meaning of the text so far
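One way to write the recurrent idea, as a sketch: the symbols \(\varphi^{H}\), \(\varphi^{O}\), \(\theta^H\) and \(\theta^O\) are notation introduced here for illustration, not taken from the slides. The hidden state \(h_n\) is updated from the previous state and the current input, and the prediction depends on the past only through \(h_n\):

\[h_n = \varphi^{H}(h_{n-1}, x_n; \theta^H), \qquad P(x_{n+1}=X \mid x_n, \ldots, x_1) \approx \varphi^{O}(h_n; \theta^O)\]

The same parameters are reused at every step, so the number of parameters does not grow with the length of the context.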

Long Short Term Memory

- 2000->2019 : Emergence of Long Short Term Memory models

speech recognition

LSTM behind “Google Translate”, “Alexa”, …

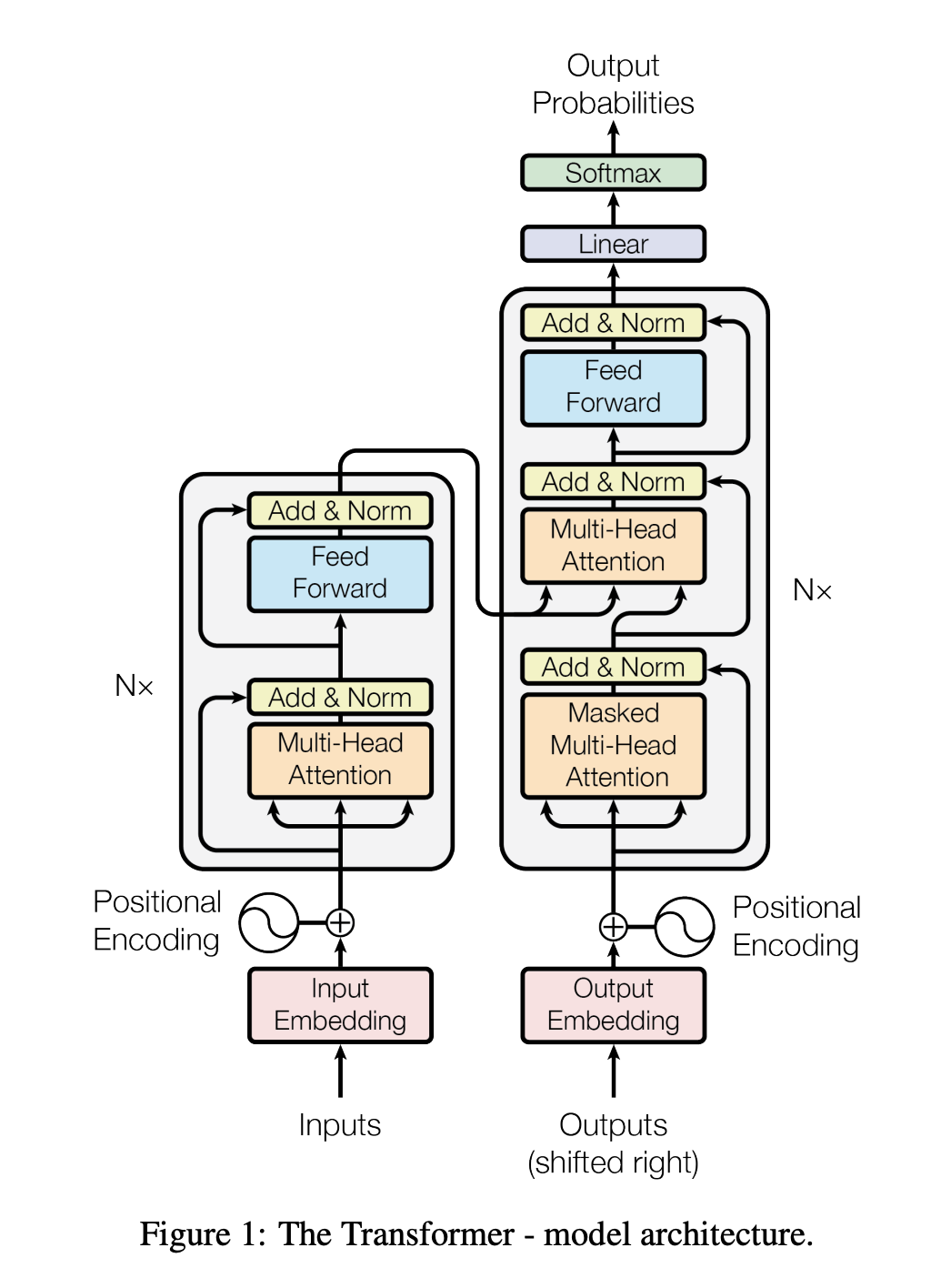

The Rise of transformers

A special kind of encoder/decoder architecture.

Most successful models since 2017

- Position Encodings

- model is not sequential anymore

- tries to learn sequence

- Attention

- Self-Attention
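Since the model no longer reads the sequence in order, the position of each word has to be injected explicitly. One common choice, used in the original transformer paper, is a sinusoidal encoding; the sketch below assumes that choice (the slides do not say which encoding is meant), and the function name is illustrative.

```python
import math

def positional_encoding(position: int, d_model: int) -> list[float]:
    """Sinusoidal encoding: sin on even slots, cos on odd slots,
    with wavelengths growing geometrically along the vector."""
    pe = []
    for i in range(0, d_model, 2):
        angle = position / (10000 ** (i / d_model))
        pe.append(math.sin(angle))
        pe.append(math.cos(angle))
    return pe[:d_model]

print(positional_encoding(0, 4))  # [0.0, 1.0, 0.0, 1.0]
print(positional_encoding(1, 4))
```

This vector is added to the embedding of the word at that position, so that two identical words at different positions get different inputs.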

Encoders / Decoders (1/2)

Take some data \((x_n)\in \mathbb{R}^x\).

Consider two functions:

- an encoder: \[\varphi^E(x; \theta^E) = h \in \mathbb{R}^h\]

- a decoder: \[\varphi^D(h; \theta^D) = x' \in \mathbb{R}^x\]

What could possibly be the value of training the coefficients with:

\[\min_{\theta^E, \theta^D} \left( \varphi^D( \varphi^E(x_n; \theta^E), \theta^D) - x_n\right)^2\]?

i.e. train the nets \(\varphi^D\) and \(\varphi^E\) to predict the “data from the data”? (it is called autoencoding)
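A toy illustration of autoencoding, under strong simplifying assumptions: the data live in \(\mathbb{R}^2\) but lie on a line, the encoder and decoder are linear, and training is plain gradient descent on the squared reconstruction error. The weights `a` and `b` play the roles of \(\theta^E\) and \(\theta^D\); the data, learning rate and number of steps are arbitrary.

```python
# Data in R^2 that lie on a line: one number is enough to describe each point.
data = [(t, 2.0 * t) for t in (-1.0, -0.5, 0.0, 0.5, 1.0)]

a = [0.1, 0.1]  # encoder weights: h = a . x
b = [0.1, 0.1]  # decoder weights: x' = b * h

def encode(x):
    return a[0] * x[0] + a[1] * x[1]

def decode(h):
    return (b[0] * h, b[1] * h)

def loss() -> float:
    """Mean squared reconstruction error over the dataset."""
    total = 0.0
    for x in data:
        xr = decode(encode(x))
        total += (xr[0] - x[0]) ** 2 + (xr[1] - x[1]) ** 2
    return total / len(data)

initial_loss = loss()
lr = 0.05
for _ in range(500):
    grad_a = [0.0, 0.0]
    grad_b = [0.0, 0.0]
    for x in data:
        h = encode(x)
        err = [b[0] * h - x[0], b[1] * h - x[1]]   # reconstruction error
        err_dot_b = err[0] * b[0] + err[1] * b[1]
        for j in range(2):
            grad_b[j] += 2 * err[j] * h / len(data)
            grad_a[j] += 2 * err_dot_b * x[j] / len(data)
    for j in range(2):
        a[j] -= lr * grad_a[j]
        b[j] -= lr * grad_b[j]

final_loss = loss()
print(initial_loss, final_loss)  # the error drops to nearly zero
print(encode((1.0, 2.0)))        # the one-dimensional code of a data point
```

Predicting the data from the data is not useful in itself; the value lies in the intermediate code `h`, which summarizes each two-dimensional point with a single number.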

Encoders / Decoders (2/2)

The relation \(\varphi^D( \varphi^E(x_n; \theta^E), \theta^D) \approx x_n\) can be rewritten as

\[x_n \xrightarrow{\varphi^E(; \theta^E)} h \xrightarrow{\varphi^D(; \theta^D)} x_n \]

When that relation is (mostly) satisfied and the dimension of \(\mathbb{R}^h\) is much smaller than that of \(\mathbb{R}^x\), \(h\) can be viewed as a lower-dimensional representation of \(x\). It encodes the information as a lower-dimensional vector \(h\), called a learned embedding.

- instead of \(\underbrace{x_n}_{\text{prompt}} \rightarrow \underbrace{y_n}_{\text{text completion}}\)

- one can learn \(\underbrace{h_n}_{\text{prompt (low dim)}} \xrightarrow{\varphi^C( ; \theta^C)} \underbrace{h_n^C}_{\text{text completion (low dim)}}\)

- it is easier to learn

- and perform the original task as \[\underbrace{x_n}_{\text{prompt}} \xrightarrow{\varphi^E} h_n \xrightarrow{\varphi^C} h_n^C \xrightarrow{\varphi^D} \underbrace{y_n}_{\text{text completion}}\]

This very powerful approach can be applied to combine encoders/decoders from different contexts (e.g. DALL-E).

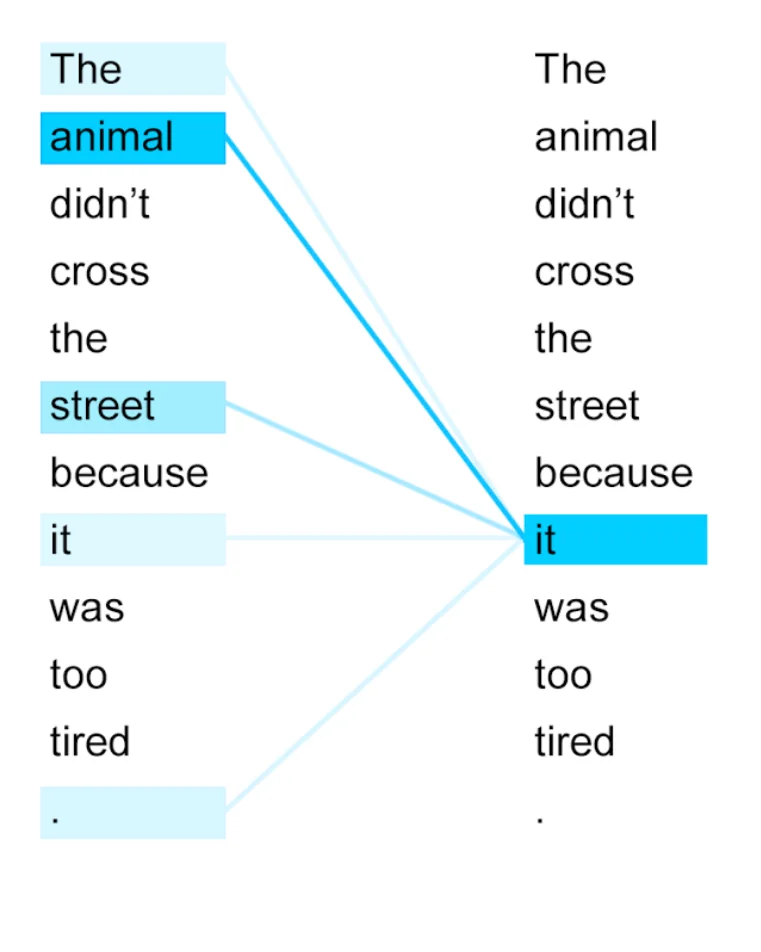

Attention

Main flaw of the recurrent approach:

- the context used to predict new words/embeddings puts a lower weight on more distant words/embeddings

- this is related to the so-called vanishing gradient problem

With the attention mechanism, each predicted word/embedding is determined by all preceding words/embeddings, with different weights that are endogenous.
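A stripped-down sketch of this mechanism: each position looks at itself and at all preceding positions, scores them with a dot product, turns the scores into weights with a softmax, and returns the weighted average. Real transformers first apply learned projections (queries, keys, values) and use several heads; here the embeddings are used directly, which is a simplifying assumption.

```python
import math

def softmax(scores: list[float]) -> list[float]:
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]

def dot(u: list[float], v: list[float]) -> float:
    return sum(ui * vi for ui, vi in zip(u, v))

def causal_attention(embeddings: list[list[float]]):
    """For each position n, average embeddings 0..n with data-dependent weights."""
    d = len(embeddings[0])
    outputs, all_weights = [], []
    for n, query in enumerate(embeddings):
        context = embeddings[: n + 1]  # only the current and preceding positions
        weights = softmax([dot(query, key) / math.sqrt(d) for key in context])
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, context)) for j in range(d)]
        )
        all_weights.append(weights)
    return outputs, all_weights

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = causal_attention(tokens)
print(weights[2])  # three weights that sum to one, largest on the last token
```

The weights depend on the content of the embeddings, not on their distance, so a far-away word can matter as much as the previous one.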

Quick summary

- Short History of Language models

- frequency tables

- monte carlo markov chains

- deep learning -> recurrent neural networks

- long-short-term memory (>2000)

- transformers (>2017)

- Since 2010, the main breakthroughs have come from the development of deep-learning techniques (software/hardware)

- Recently, models/algorithms have improved tremendously